Earth is Full. Why the Future of AI Training is in Orbit.

The grid is strained and water is scarce. How falling launch costs and state-of-the-art materials are making "Orbital Compute" the only logical next step.

Why are we going to space to train models?

One of the drawbacks of making AI videos of cats doing pull-ups in gyms is that each one takes a lot of energy to produce. With dreams of AGI on every tech bro’s mind, the biggest hurdle now looks like hardware, not software. Some are calling it an “infrastructure crisis”. As foundational models scale exponentially, the demand for high-density compute is colliding with the hard physical constraints of utility grids, land availability, and cooling water.

If we can’t keep up with demand on Earth, we are forced to look elsewhere: space. In fact, we’re already in the Orbital Compute Age. In November 2025, the Starcloud-1 pathfinder mission ran a Google Gemma model on an orbiting Nvidia H100, a proof of concept that Orbital Compute is indeed possible. It opens a new era in which the bulk of AI training will probably migrate to the abundant sunlight and cold vacuum of space.

Mach33 publishes research and news on frontier technology in AI and data centers, especially Orbital Compute and Orbital Real Estate (yes, that’s what they call them). Some experts predict fully functional model-training infrastructure deployed and run entirely in space. I won’t blame you for thinking this sounds like science fiction; I agree, it does. But let’s dig deeper and see what the experts are actually saying.

The 3 main pillars for the transition

According to researchers, there are 3 critical pillars supporting this transition from terrestrial compute to orbital compute.

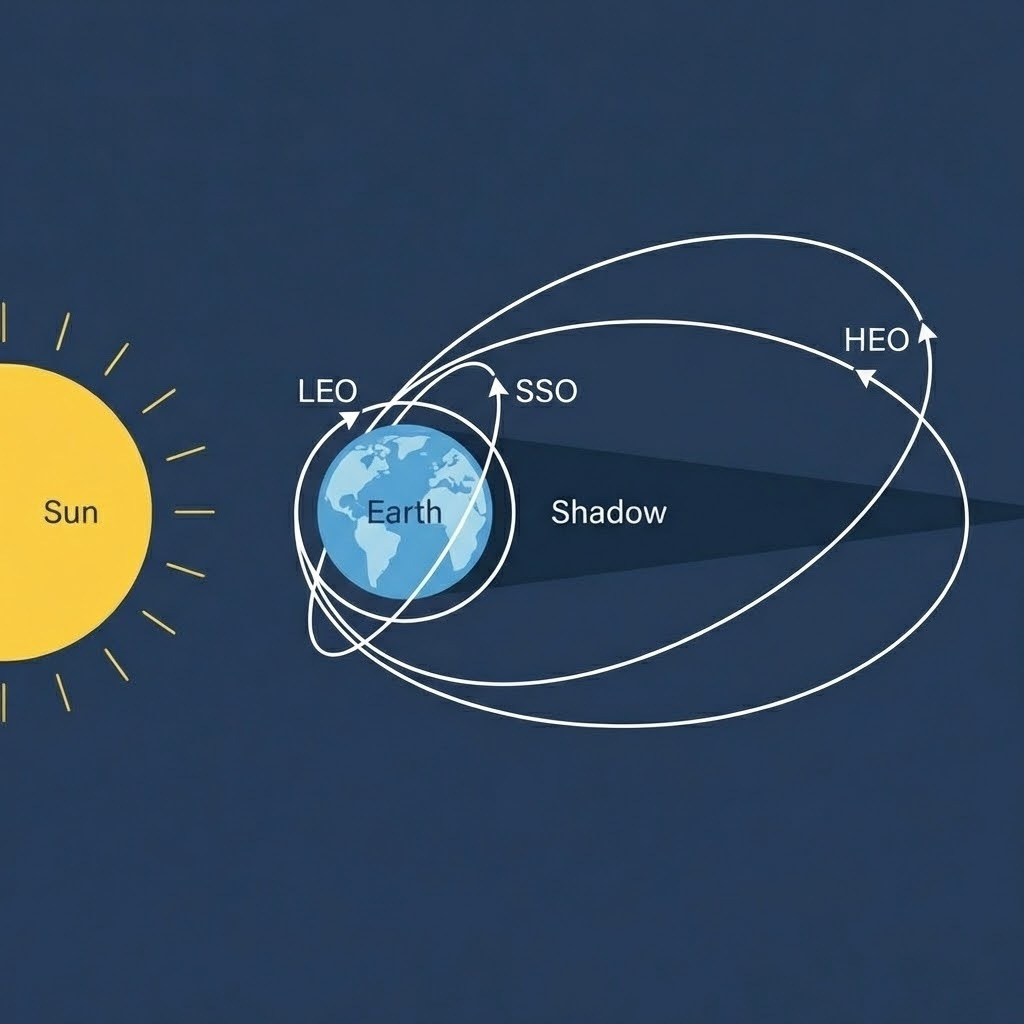

Orbital Real Estate: This market is segmented into specific regimes: Sun-Synchronous Orbit (SSO) for near-term deployment thanks to its continuous solar exposure, and High Earth Orbit (HEO) or Lagrange Point 1 (L1) for long-term, terawatt-scale training clusters.

The Energy Equation: Falling transport costs are rapidly offsetting hardware costs, driving orbital power costs down to just $6 to $9 per Watt, compared with the current $12 per Watt for terrestrial systems.

Thermodynamic Engineering: New research is overturning the historical misconception of a “cooling constraint”, demonstrating that radiators facing deep space offer superior heat rejection for high-flux silicon (like Nvidia H100 GPUs).
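To put the Energy Equation in numbers, here is a small Python sketch comparing the quoted $12/W terrestrial figure with the $6 to $9/W orbital projection. The 100 MW cluster size is a hypothetical chosen for illustration, not a Mach33 figure, and the model is deliberately naive: it simply multiplies power by cost per Watt.

```python
# Figures quoted above, in US dollars per Watt delivered to the processor.
TERRESTRIAL_USD_PER_W = 12.0
ORBITAL_USD_PER_W_RANGE = (6.0, 9.0)  # projected orbital range


def savings_fraction(baseline: float, new: float) -> float:
    """Fraction of the baseline cost saved by switching to `new`."""
    return (baseline - new) / baseline


def cluster_cost_usd(power_w: float, usd_per_w: float) -> float:
    """Cost of delivering `power_w` Watts at a given $/W figure."""
    return power_w * usd_per_w


if __name__ == "__main__":
    power_w = 100e6  # hypothetical 100 MW training cluster (illustrative only)
    print(f"Terrestrial: ${cluster_cost_usd(power_w, TERRESTRIAL_USD_PER_W) / 1e9:.1f}B")
    for orbital in ORBITAL_USD_PER_W_RANGE:
        cost = cluster_cost_usd(power_w, orbital)
        saved = savings_fraction(TERRESTRIAL_USD_PER_W, orbital)
        print(f"Orbital at ${orbital:.0f}/W: ${cost / 1e9:.1f}B ({saved:.0%} saving)")
```

At these figures the orbital projection works out to a 25% to 50% saving per Watt, or $0.6B to $0.9B versus $1.2B for the hypothetical 100 MW cluster.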

Let’s explore each of these pillars in detail.

How much does it cost today?

The benchmark cost to deliver one Watt of power to the processor, excluding the compute hardware itself, is currently $12 per Watt. The biggest cost drivers are:

Utility Solar Plants: The generation component costs $1.0 - $1.6/W

Grid and Substations: Interconnection costs range from $0.2 - $0.5/W

Mechanical and Electrical: This is the bulk of the cost, covering switchgear, UPS (and batteries), cooling loops and so on, at $7 - $12/W.
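As a quick sanity check on the $12/W benchmark, the sketch below adds up the three line items listed above. It assumes the ranges can be summed end to end (all lows together, all highs together), and it ignores any cost items not listed here.

```python
# Line items quoted above as (low, high) ranges in US dollars per Watt.
COST_STACK_USD_PER_W = {
    "utility solar plant": (1.0, 1.6),
    "grid and substations": (0.2, 0.5),
    "mechanical and electrical": (7.0, 12.0),
}


def total_range(stack: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Sum the low ends and the high ends of every line item separately."""
    low = sum(lo for lo, _ in stack.values())
    high = sum(hi for _, hi in stack.values())
    return low, high


def _midpoint(rng: tuple[float, float]) -> float:
    """Midpoint of a (low, high) range."""
    return (rng[0] + rng[1]) / 2


def midpoint_share(stack: dict[str, tuple[float, float]], item: str) -> float:
    """Share of the midpoint total attributable to one line item."""
    total_mid = sum(_midpoint(rng) for rng in stack.values())
    return _midpoint(stack[item]) / total_mid


if __name__ == "__main__":
    low, high = total_range(COST_STACK_USD_PER_W)
    print(f"Listed items total: ${low:.1f} - ${high:.1f} per Watt")
    share = midpoint_share(COST_STACK_USD_PER_W, "mechanical and electrical")
    print(f"Mechanical and electrical share at midpoint: {share:.0%}")
```

The listed items alone span roughly $8.2 to $14.1 per Watt, which brackets the $12/W benchmark, and mechanical and electrical plant accounts for about 85% of the midpoint total.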

The last item, cooling infrastructure, is truly one of the biggest motivations to move training to space. GPU junction temperatures can reach roughly 95°C, which pushes air cooling to its physical limits and forces operators toward water-hungry cooling. As a result, a single data center can consume up to 4 million liters of water EVERY DAY, enough to supply 300,000 people.

This is obviously unsustainable. The trajectory then becomes clear: to keep up with demand, we need a radically different cooling system, and space has the answer.

Where exactly can we put the datacenters?

The fundamental economic advantage of space is the solar multiplier. Space-based solar panels operate in a vacuum, free of atmospheric absorption, scattering, and weather-related intermittency.

Let’s go into more detail on the pillars we introduced earlier, starting with orbital real estate.

Orbital real estate essentially splits into three categories:

Dawn-Dusk Sun-Synchronous Orbit (SSO) - This is the near-term recommendation, or “starter orbit”. In an SSO, the satellite’s orbital plane rotates at the same rate as the Earth orbits the Sun (about 0.98° per day). By choosing the correct inclination and an altitude of about 600-800 km, we can achieve near-perpetual sunlight, almost never dipping into Earth’s shadow.
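The 0.98° per day figure is the rate at which the orbital plane must turn to keep pace with Earth’s yearly trip around the Sun (360° over roughly 365.24 days). Earth’s equatorial bulge (the J2 term) provides that drift, and the required inclination can be estimated with the standard first-order formula. This is a textbook sketch, assuming a circular orbit and commonly used values for Earth’s radius, gravitational parameter and J2; real mission design uses higher-fidelity models.

```python
import math

# Commonly used Earth constants (WGS-84 radius, EGM96-style mu and J2).
MU_EARTH_KM3_S2 = 398_600.4418  # gravitational parameter, km^3/s^2
R_EARTH_KM = 6_378.137  # equatorial radius, km
J2 = 1.08263e-3  # second zonal harmonic (oblateness), dimensionless

# The orbital plane must turn 360 degrees per tropical year (~0.9856 deg/day).
SECONDS_PER_DAY = 86_400.0
TROPICAL_YEAR_DAYS = 365.2422
TARGET_RAAN_RATE_RAD_S = 2.0 * math.pi / (TROPICAL_YEAR_DAYS * SECONDS_PER_DAY)


def sso_inclination_deg(altitude_km: float) -> float:
    """Inclination (degrees) that makes a circular orbit Sun-synchronous.

    First-order J2 secular drift of the ascending node:
        dRAAN/dt = -1.5 * J2 * (R / a)**2 * n * cos(i)
    solved for cos(i) with dRAAN/dt set to the target rate.
    """
    a = R_EARTH_KM + altitude_km  # semi-major axis of a circular orbit, km
    n = math.sqrt(MU_EARTH_KM3_S2 / a**3)  # mean motion, rad/s
    cos_i = -TARGET_RAAN_RATE_RAD_S / (1.5 * J2 * (R_EARTH_KM / a) ** 2 * n)
    return math.degrees(math.acos(cos_i))


if __name__ == "__main__":
    drift = math.degrees(TARGET_RAAN_RATE_RAD_S) * SECONDS_PER_DAY
    print(f"Required drift: {drift:.4f} deg/day")
    for h in (600, 700, 800):
        print(f"{h} km -> inclination {sso_inclination_deg(h):.2f} deg")
```

For the 600-800 km band this gives inclinations of roughly 97.8° to 98.6°: slightly retrograde, near-polar orbits, which is the familiar SSO geometry.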

Moreover, this orbit delivers an 8.2x cost-normalized solar index compared to Earth, and the near-constant sunlight more or less eliminates the need for massive battery buffers.

However, this orbit has a huge limitation. Because we’re aiming for near-24-hour sunlight, the usable orbital corridor is very narrow: roughly 1% of the usable volume of Low Earth Orbit (LEO). As more operators rush to claim these high-value orbits, congestion becomes a critical concern. Collision risk and debris mitigation will eventually cap the scalability of this regime, so for long-term scale we need to go higher.

High Earth Orbit (HEO) - HEO offers more than 500x the volume of LEO, providing virtually unlimited room for expansion, and satellites there experience very long periods of sunlight, around 95% of the time in most cases. The problem is that this regime lies in the Van Allen radiation belts, which expose electronics and solar panels to intense, high-energy particles. That means components need more shielding to avoid asset degradation.

Sun-Earth Lagrange Point 1 (L1) - This is the “endgame” location for orbital compute. Located 1.5 million kilometers from Earth, L1 is a gravitational saddle point where a satellite can maintain a fixed position relative to the Sun and Earth. It offers continuous, unshadowed solar access and unconstrained physical volume, and there are no radiation belts demanding extra shielding. The biggest issue with computing this far from Earth is latency, which, to be honest, will be a factor for all of these orbits.
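Latency has a hard floor set by the speed of light. The sketch below computes that minimum round-trip time for each regime. The 700 km SSO altitude (the middle of the quoted band) and the 35,786 km HEO altitude (geostationary altitude) are assumptions for illustration, since the article does not pin HEO to one altitude; real links add slant range, routing and processing on top.

```python
# Speed of light in vacuum, km/s.
C_KM_S = 299_792.458

# Representative straight-line distances to a ground station, in km.
# The SSO and HEO altitudes are illustrative assumptions, not article figures.
DISTANCES_KM = {
    "dawn-dusk SSO (700 km, directly overhead)": 700.0,
    "HEO (assumed GEO altitude, 35,786 km)": 35_786.0,
    "Sun-Earth L1 (1.5 million km)": 1.5e6,
}


def min_round_trip_s(distance_km: float) -> float:
    """Light-time lower bound for a request and its reply, ignoring routing and processing."""
    return 2.0 * distance_km / C_KM_S


if __name__ == "__main__":
    for name, d in DISTANCES_KM.items():
        print(f"{name}: {min_round_trip_s(d) * 1e3:,.1f} ms round trip")
```

The floor is about 5 ms for SSO, about 240 ms for a GEO-altitude HEO and about 10 seconds for L1.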

Alright, what about the cooling then?

On Earth, data centers cool chips by pumping water over heat sinks. In space, heat must be rejected via radiation, and the radiated power is proportional to the fourth power of the absolute temperature (the Stefan-Boltzmann law).

Space has a background temperature of around 3 Kelvin. So instead of rejecting heat into an ambient environment at 303-313 K, a radiator in space faces an effective sink temperature VASTLY lower than Earth’s. Keeping the radiator at a high temperature (60-80°C) while it faces a near-absolute-zero background creates a heat-flux potential that terrestrial systems cannot match without active refrigeration.
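A short Python sketch of the Stefan-Boltzmann law makes the sink-temperature argument concrete. It compares the net flux radiated by a radiator at 60 and 80°C toward a 3 K sky and toward a 303 K surrounding. The emissivity of 0.9 and the view factor of 1 are assumptions, and this is a radiation-only comparison: on Earth most heat leaves by convection and evaporation, so the sketch isolates the effect of sink temperature rather than modeling a terrestrial cooling plant.

```python
# Stefan-Boltzmann constant, W m^-2 K^-4.
SIGMA = 5.670374419e-8


def c_to_k(t_celsius: float) -> float:
    """Convert degrees Celsius to Kelvin."""
    return t_celsius + 273.15


def net_radiative_flux_w_m2(t_radiator_k: float, t_sink_k: float,
                            emissivity: float = 0.9) -> float:
    """Net power radiated per m^2 of one radiator face.

    Grey-body model with a view factor of 1 to the sink:
        q = emissivity * SIGMA * (T_rad**4 - T_sink**4)
    """
    return emissivity * SIGMA * (t_radiator_k**4 - t_sink_k**4)


if __name__ == "__main__":
    for t_rad_c in (60.0, 80.0):
        t_rad = c_to_k(t_rad_c)
        space = net_radiative_flux_w_m2(t_rad, 3.0)
        earth = net_radiative_flux_w_m2(t_rad, 303.0)
        print(f"Radiator at {t_rad_c:.0f} C: {space:.0f} W/m^2 to deep space, "
              f"{earth:.0f} W/m^2 to a 303 K surrounding ({space / earth:.1f}x)")
```

With these assumptions the same radiator sheds roughly 2x (at 80°C) to 3x (at 60°C) more net heat per square meter toward deep space than toward a 303 K surrounding.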

The challenge then shifts from heat to mass. Radiators are large structures, and we measure their efficiency by areal density (kg/m²): how heavy the radiator is per unit of cooling area. For context, current honeycomb radiators weigh ~19 kg/m².

Next-generation radiators are proving vastly more efficient: deployable fabric radiators, flexible loop heat pipes, and oscillating heat pipes are demonstrating densities of less than 6 kg/m².
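Areal density matters because radiator area scales directly with heat load. The sketch below sizes a radiator for a hypothetical 1 MW of GPU waste heat at 80°C and converts area to mass at the two areal densities quoted above. The heat load, emissivity (0.9), single radiating face and 3 K sink are all assumptions, and pumps, heat pipes and support structure are ignored.

```python
# Stefan-Boltzmann constant, W m^-2 K^-4.
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_load_w: float, t_radiator_k: float, emissivity: float = 0.9,
                     faces: int = 1, t_sink_k: float = 3.0) -> float:
    """Panel area needed to reject `heat_load_w` by radiation alone.

    `faces` is how many sides of each panel radiate to space (1 is conservative).
    """
    flux_per_face = emissivity * SIGMA * (t_radiator_k**4 - t_sink_k**4)
    return heat_load_w / (flux_per_face * faces)


def radiator_mass_kg(area_m2: float, areal_density_kg_m2: float) -> float:
    """Radiator mass from its area and areal density (kg/m^2)."""
    return area_m2 * areal_density_kg_m2


if __name__ == "__main__":
    heat_load_w = 1e6  # hypothetical 1 MW of GPU waste heat
    area = radiator_area_m2(heat_load_w, t_radiator_k=353.15)  # 80 C radiator
    print(f"Area for 1 MW: {area:,.0f} m^2")
    for label, density in (("honeycomb", 19.0), ("next-gen", 6.0)):
        print(f"{label} at {density} kg/m^2: {radiator_mass_kg(area, density) / 1000:.1f} t")
```

Under these assumptions 1 MW needs about 1,260 m² of panel: roughly 24 t at 19 kg/m² versus roughly 7.6 t at 6 kg/m². A panel radiating from both faces would halve the area.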

Space cooling is a closed-loop, water-free process. Heat is rejected as infrared light, leaving zero thermal pollution and consuming zero water.

So what’s stopping us?

It all comes down to price. Reaching HEO requires significantly more energy than reaching LEO, although Starship’s on-orbit refueling capability flattens this cost curve. We’re waiting for launch prices to come down, especially on Starship. Today, a launch on Falcon 9 costs about $2,000/kg. Starship is poised to bring that down to approx. $100/kg, which is a HUGE improvement. Considering that just a few years ago we were paying upwards of $10,000/kg, launch costs are falling rapidly and are projected to keep falling, opening up the possibility of Space Data Centers very soon.
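Launch price and radiator areal density multiply, which is why both trends matter together. The sketch below computes the cost of lifting one square meter of radiator using the per-kg prices and areal densities quoted in this article. These are approximate LEO prices; reaching HEO or L1 costs more per kg, so treat the results as a lower bound.

```python
# Approximate launch prices quoted in the article, US dollars per kg.
LAUNCH_USD_PER_KG = {
    "a few years ago": 10_000.0,
    "Falcon 9 today": 2_000.0,
    "Starship (projected)": 100.0,
}

# Radiator areal densities quoted in the article, kg per m^2 of cooling area.
RADIATOR_KG_PER_M2 = {
    "honeycomb": 19.0,
    "next-gen": 6.0,
}


def launch_cost_per_m2(areal_density_kg_m2: float, usd_per_kg: float) -> float:
    """Cost to launch one square meter of radiator at a given price per kg."""
    return areal_density_kg_m2 * usd_per_kg


if __name__ == "__main__":
    for vehicle, price in LAUNCH_USD_PER_KG.items():
        for radiator, density in RADIATOR_KG_PER_M2.items():
            cost = launch_cost_per_m2(density, price)
            print(f"{vehicle:>22} | {radiator:>9}: ${cost:,.0f} per m^2")
```

The spread runs from $190,000 per m² (honeycomb at $10,000/kg) down to $600 per m² (next-gen at Starship’s projected $100/kg), a drop of more than 300x.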

Orbital compute is not only a necessity but also a relief valve for Earth’s digital industrialization. Paving thousands of acres of land, consuming millions of liters of water and straining the power grid is not a long-term solution. At the very least, space data centers are kickstarting a new revolution in materials science, producing materials that work not just on Earth but anywhere in space.

P.S. I researched this topic by reading Mach33’s articles on their website. Huge thanks to the Mach33 team for sharing their knowledge.
